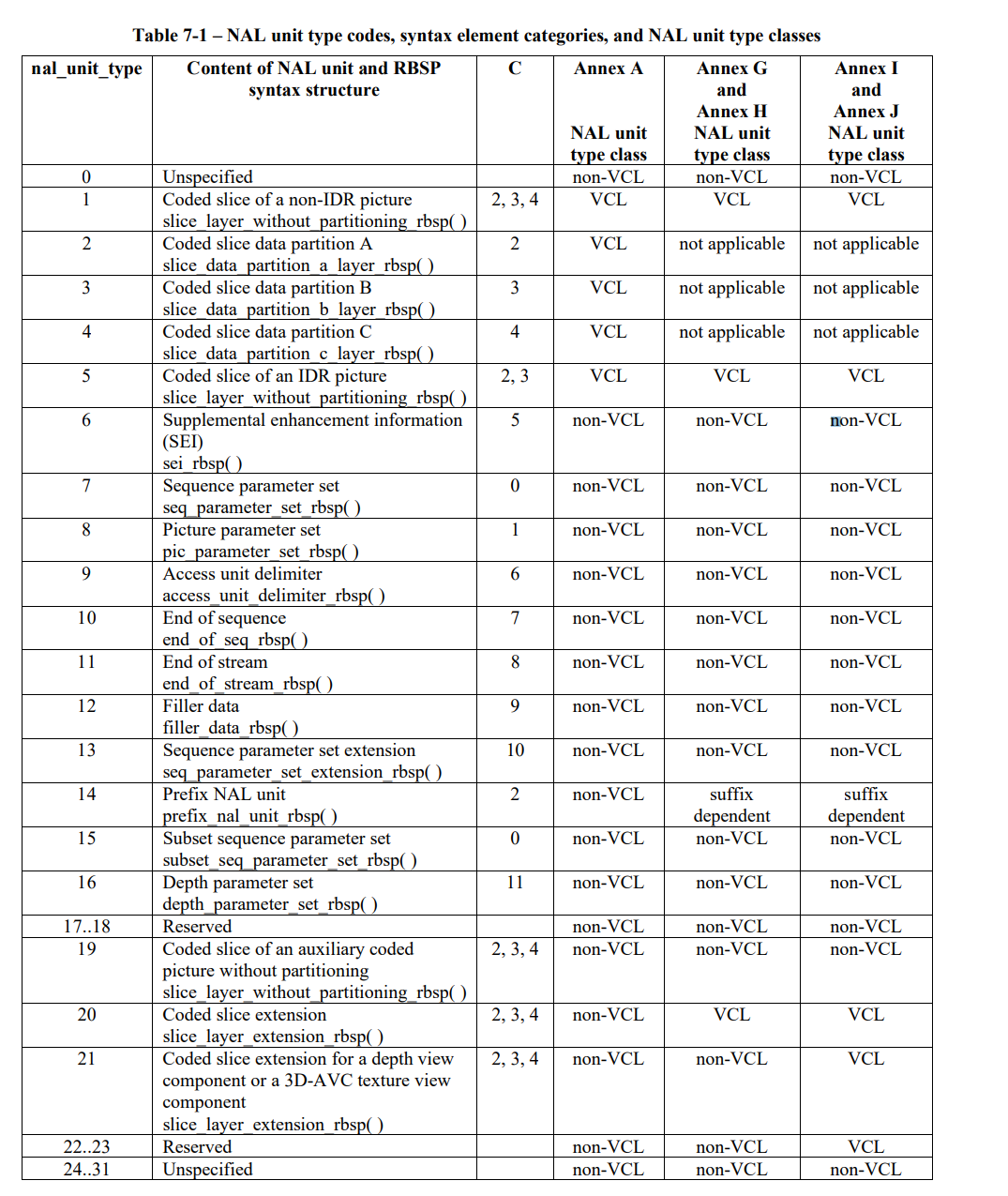

Network Abstraction Layer Unit Types

- F : 1bit (forbidden_zero_bit)

- A value of 1 indicates a syntax violation

- NRI : 2bits (nal_ref_idc)

- 00 indicates that the content of the NAL unit is not used to reconstruct reference pictures

- Type : 5bits (nal_unit_type)

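NAL unit payload type. In the byte stream format, each NAL unit is preceded by a 3-byte or 4-byte start code (0x000001 or 0x00000001), and the header byte that follows the start code carries the NAL type.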
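The three header fields can be extracted from the first byte after the start code with shifts and masks. This is a minimal sketch; the function name `parse_nal_header` is made up for illustration and is not part of any library.

```python
def parse_nal_header(first_byte: int) -> dict:
    """Split the one-byte NAL unit header into F, NRI and Type."""
    return {
        "forbidden_zero_bit": (first_byte >> 7) & 0x01,  # F: 1 bit
        "nal_ref_idc": (first_byte >> 5) & 0x03,         # NRI: 2 bits
        "nal_unit_type": first_byte & 0x1F,              # Type: 5 bits
    }


# 0x67 = 0b0_11_00111: F = 0, NRI = 3, Type = 7 (sequence parameter set)
print(parse_nal_header(0x67))
```

For example, a header byte of 0x65 decodes to nal_unit_type 5, a coded slice of an IDR picture.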

NAL unit syntax

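The emulation prevention byte is 0x03. When 0x00 0x00 appears consecutively inside a NAL unit (and the next byte is 0x03 or smaller), 0x03 is inserted after it so that the 0x00 0x00 is not recognized as a start code.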
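A decoder has to undo this before parsing the RBSP. The sketch below drops every 0x03 that directly follows two zero bytes; the function name `remove_emulation_prevention` is hypothetical, and the input is assumed to be a single NAL unit with the start code already stripped.

```python
def remove_emulation_prevention(nal_payload: bytes) -> bytes:
    """Drop each 0x03 that follows two consecutive 0x00 bytes."""
    out = bytearray()
    zeros = 0  # number of consecutive 0x00 bytes seen so far
    for byte in nal_payload:
        if zeros >= 2 and byte == 0x03:
            zeros = 0  # the inserted byte is skipped, not copied
            continue
        out.append(byte)
        zeros = zeros + 1 if byte == 0x00 else 0
    return bytes(out)


print(remove_emulation_prevention(b"\x00\x00\x03\x01").hex())
```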

SPS

sequence parameter set: A syntax structure containing syntax elements that apply to zero or more entire coded video sequences as determined by the content of a seq_parameter_set_id syntax element found in the picture parameter set referred to by the pic_parameter_set_id syntax element found in each slice header.

VUI (Video Usability Information)

PPS

- chroma_qp_index_offset:

- specifies the offset that shall be added to QPY and QSY for addressing the table of QPC values for the Cb chroma component. The value of chroma_qp_index_offset shall be in the range of −12 to +12, inclusive

Profile

High Profile

Baseline Profile

Level

level = level_idc / 10

Color Bit-depth

How many colors can be represented.

Ex) 8 bits -> 256 colors

Color depth refers to the maximum number of colors an image can contain. Color depth is determined by the bit depth of an image (the number of binary bits that define the shade or color of each pixel in a bitmap). For example, a pixel with a bit depth of 1 can have two values: black and white. The greater the bit depth, the more colors an image can contain, and the more accurate the color representation is.

For example, an 8-bit GIF image can contain up to 256 colors, but a 24-bit JPEG image can contain approximately 16 million colors.

Usually, RGB, grayscale, and CMYK images contain 8 bits of data per color channel. That is why an RGB image is often referred to as 24-bit RGB (8 bits x 3 channels), a grayscale image is referred to as 8-bit grayscale (8 bits x 1 channel), and a CMYK image is referred to as 32-bit CMYK (8 bits x 4 channels).

Regardless of how many colors an image contains, the image display is limited to the highest number of colors supported by the monitor on which it is viewed. For example, an 8-bit monitor can display only up to 256 colors in a 24-bit image.

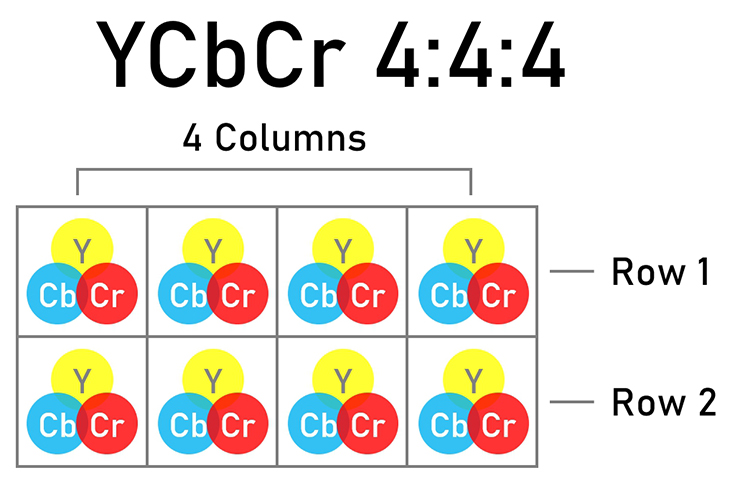

Chroma Format

- Chroma format: the way digital images are stored, for example RGB or YUV

RGB vs. YUV

- RGB: digital colour image display, where different colours are formed by combining the three primary colours of light—red, green, and blue (RGB)—in different proportions.

- YCbCr: YCbCr uses three different signals that, when combined, can replicate a colour image

- Luminance (brightness) - Y

- Blue colour difference - Cb

- Red colour difference - Cr

- The camera generates an RGB image from the light received by the image sensor.

- The RGB image is encoded into a YCbCr signal, which is what is recorded and transmitted to viewing devices.

- The display device (computer, TV set or monitor) decodes the YCbCr signals and converts them back into RGB for display.
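The RGB to YCbCr step can be sketched per pixel as below. The coefficients are an assumption: they are the full-range BT.601 values used by JPEG/JFIF, while real video pipelines often use BT.709 or limited-range variants instead. The function name `rgb_to_ycbcr` is made up for illustration.

```python
def rgb_to_ycbcr(r: int, g: int, b: int) -> tuple:
    """Convert one 8-bit RGB pixel to 8-bit YCbCr (full-range BT.601, assumed)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b                # luminance
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b     # blue colour difference
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b     # red colour difference

    def clamp(value: float) -> int:
        return max(0, min(255, round(value)))

    return clamp(y), clamp(cb), clamp(cr)


print(rgb_to_ycbcr(255, 255, 255))  # white: full luminance, neutral chroma
```

Any grey pixel (R = G = B) maps to Cb = Cr = 128, which shows that the two colour difference channels carry no information when there is no colour.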

Chroma subsampling and data compression

Chroma (colour) subsampling:

- a method that exploits the fact that the human eye is less sensitive to colour than to brightness, reducing the colour information

- applied when converting RGB files to YCbCr signals, to enable smaller data files.

- 4:2:2 and 4:2:0 refer to different methods of chroma subsampling.

How does it work?

- Colour sampling is described over 8 pixels in a 4×2 array

- The luminance (Y) channel for all 4 columns of pixels is recorded, hence the first number “4”.

- The second number indicates the number of colour difference signals (CbCr) recorded from the first row of pixels.

- The third number indicates the number of colour difference signals (CbCr) recorded from the second row of pixels.

- Uncompressed YCbCr 4:4:4

- When the CbCr information from all pixels is recorded (no unsampled pixel), the chroma subsampling is indicated as 4:4:4 (no subsampling).

- This provides the highest quality—RAW files are equivalent to 4:4:4. However, it also results in large files.

- For smaller file sizes, we need to lose some colour information to compress the file. One method is through sub-sampling, where the information from some pixels is not recorded.
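To make the size difference concrete, the sketch below estimates the uncompressed size of one frame from the three numbers of the notation. It assumes 8 bits per sample, frame dimensions that are multiples of the 4×2 block, and that the second and third numbers count the Cb (or Cr) samples in the first and second row; the function name `frame_bytes` is hypothetical.

```python
def frame_bytes(width: int, height: int, notation: str, bit_depth: int = 8) -> int:
    """Estimate the uncompressed size in bytes of one YCbCr frame."""
    j, a, b = (int(part) for part in notation.split(":"))
    luma = width * height
    # A 4x2 block holds 2*j luma samples and (a + b) samples per chroma plane.
    chroma_per_plane = luma * (a + b) // (2 * j)
    total_samples = luma + 2 * chroma_per_plane  # Y + Cb + Cr
    return total_samples * bit_depth // 8


for notation in ("4:4:4", "4:2:2", "4:2:0"):
    print(notation, frame_bytes(1920, 1080, notation))
```

Under these assumptions 4:2:2 needs two thirds of the 4:4:4 size, and 4:2:0 needs half of it.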

FPS

- FPS (frames per second): the number of frames displayed in one second

MaxFPS = Ceil( time_scale ÷ ( 2 * num_units_in_tick ) )

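To summarize the above:

- num_units_in_tick: the number of time units in one clock tick

- time_scale: the number of time units that pass in one second

- clock tick (Equation C-1): the minimum interval of time that can be represented in the coded data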

tc = num_units_in_tick ÷ time_scale

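Since the frame rate is the number of frames shown in one second, assume 1 sec = tc * fps:

1sec = tc * fps

fps = 1sec / tc

fps = 1sec / (num_units_in_tick / time_scale)

fps = time_scale / num_units_in_tick

Having derived fps = time_scale / num_units_in_tick, I expected time_scale to be 30,000 when fps is 30 000 ÷ 1001 Hz (the highlighted part of the spec excerpt), but the document says 60,000, which confused me.

The material above resolved the question: the MaxFPS formula divides by 2 * num_units_in_tick (a frame typically spans two clock ticks, one per field), so the assumption 1 sec = tc * fps is off by a factor of two. With time_scale = 60 000 and num_units_in_tick = 1001, the frame rate is 60 000 ÷ ( 2 * 1001 ) = 30 000 ÷ 1001 Hz.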

time_scale and num_units_in_tick can be obtained from vui_parameters (see Annex E for details).
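The two quantities can be turned into a frame rate as sketched below. The sketch follows the MaxFPS formula and assumes two clock ticks per frame, which is the usual case but not guaranteed for every stream; the function names are made up for illustration.

```python
import math
from fractions import Fraction


def clock_tick(num_units_in_tick: int, time_scale: int) -> Fraction:
    """tc = num_units_in_tick / time_scale, in seconds (Equation C-1)."""
    return Fraction(num_units_in_tick, time_scale)


def frame_rate(time_scale: int, num_units_in_tick: int) -> Fraction:
    """Frames per second, assuming one frame spans two clock ticks."""
    return Fraction(time_scale, 2 * num_units_in_tick)


def max_fps(time_scale: int, num_units_in_tick: int) -> int:
    """MaxFPS = Ceil( time_scale / ( 2 * num_units_in_tick ) )."""
    return math.ceil(frame_rate(time_scale, num_units_in_tick))


print(float(frame_rate(60000, 1001)), max_fps(60000, 1001))
```

With the spec's example values (time_scale = 60 000, num_units_in_tick = 1001) this gives 30 000 ÷ 1001 Hz, roughly 29.97 fps, and a MaxFPS of 30.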

p. 324 - Maximum frame rates (frames per second) for some example frame sizes

- bitrate: the amount of data that makes up one second of video

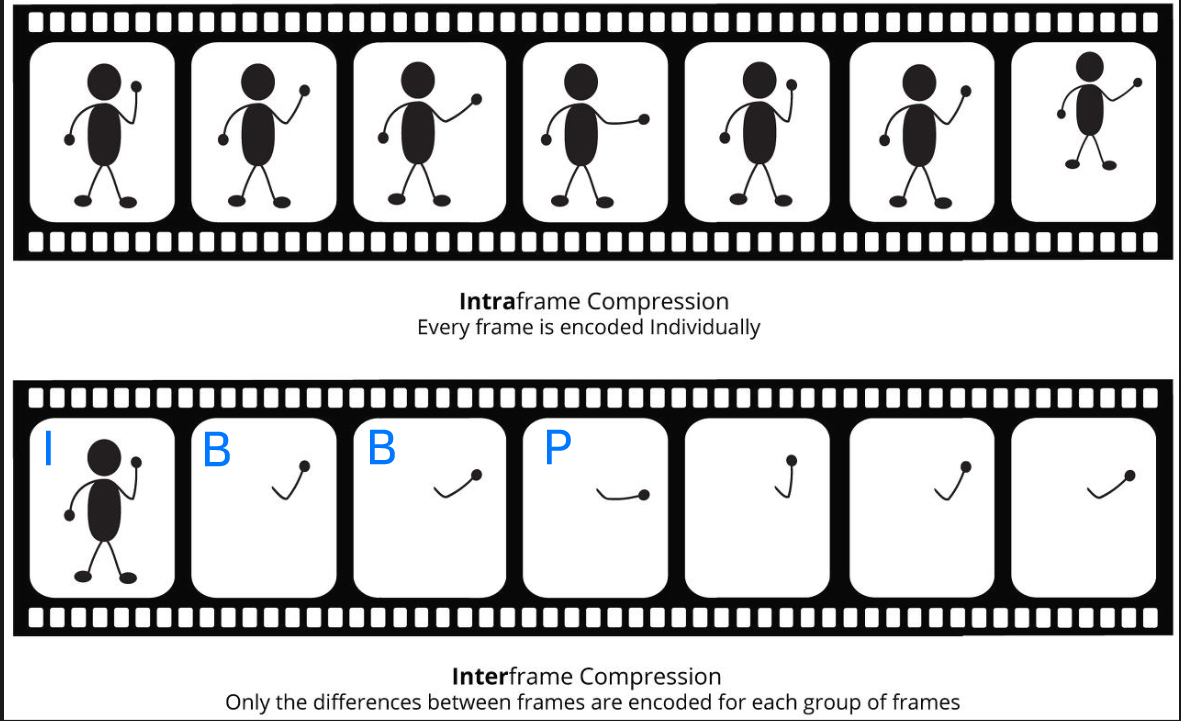

GOP(Group of Pictures)

- A method of compressing several pictures together as one group.

- There are three types of frames: I, P, and B.

POC(Picture Order Count)

- defines display order of AUs in the encoded bitstream.

- According to the H.264 standard, POC is derived from slice headers and is proportional to AU timings, starting from the first IDR AU, where POC is equal to 0.

- POC is defined in the H.264 standard by TopFieldOrderCnt and BottomFieldOrderCnt

DTS, PTS

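What is DTS (Decoding Time Stamp)?

- When a player receives the video data stream ahead of decoding, and the video contains B-frames, the I-frame and P-frame generally arrive first and the B-frames arrive after them: I -> P -> B.

- Decoding before playback processes the data in the order it was received, so the DTS is the timestamp that expresses each frame's time in this decoding order.

- B-frames are decoded and played as soon as they are received, so their DTS and PTS values are the same.

- For that reason, the DTS is omitted for B-frames and the PTS value is used as the DTS.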

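DecodingTime = SegmentTimelineInitialTime + (BaseMediaDecodeTime + Σ(SampleDuration))/timescale

What is PTS (Presentation Time Stamp)?

- A timestamp that expresses the presentation time, used to synchronize video and audio.

- However, for video that contains B-frames, the actual presentation order is I, B, B, P, which differs from the order in which the player received the frames (I-frames and P-frames first).

- In this case (except for B-frames, which are decoded and played immediately), the DTS and PTS values differ, as shown in the image below.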

PresentationTime = DecodingTime + CompositionTimeOffset/timescale
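The two formulas can be applied to a list of samples in decode order as sketched below. The sketch assumes that Σ(SampleDuration) means the sum of the durations of all preceding samples in the segment and that durations and offsets are given in timescale units; the function name `sample_times` and the example values are hypothetical.

```python
from fractions import Fraction


def sample_times(base_media_decode_time, durations, composition_offsets,
                 timescale, initial_time=0):
    """Return (DTS, PTS) in seconds for each sample, in decode order."""
    times = []
    elapsed = 0  # sum of the durations of the preceding samples
    for duration, offset in zip(durations, composition_offsets):
        dts = initial_time + Fraction(base_media_decode_time + elapsed, timescale)
        pts = dts + Fraction(offset, timescale)
        times.append((dts, pts))
        elapsed += duration
    return times


# Decode order I, P, B, B with a 90 kHz timescale and 3000 ticks per frame.
frames = ["I", "P", "B", "B"]
times = sample_times(0, [3000] * 4, [3000, 9000, 0, 0], 90000)
display_order = [frames[i] for i in sorted(range(4), key=lambda i: times[i][1])]
print(display_order)
```

In this made-up example the B-frames have a composition offset of 0, so their DTS equals their PTS, and sorting by PTS restores the I, B, B, P presentation order.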

TopFieldOrderCnt / BottomFieldOrderCnt:(8.2.1 Decoding process for picture order count)

- indicate the picture order of the corresponding top field or bottom field relative to the first output field of the previous IDR picture or the previous reference picture including a memory_management_control_operation equal to 5 in decoding order

- derived by invoking one of the decoding processes for picture order count type 0, 1, and 2 in subclauses 8.2.1.1, 8.2.1.2, and 8.2.1.3, respectively

- When the current picture includes a memory_management_control_operation equal to 5, the following applies after the decoding of the current picture:

- tempPicOrderCnt = PicOrderCnt( CurrPic )

- TopFieldOrderCnt of the current picture (if any) = TopFieldOrderCnt − tempPicOrderCnt

- BottomFieldOrderCnt of the current picture = BottomFieldOrderCnt − tempPicOrderCnt

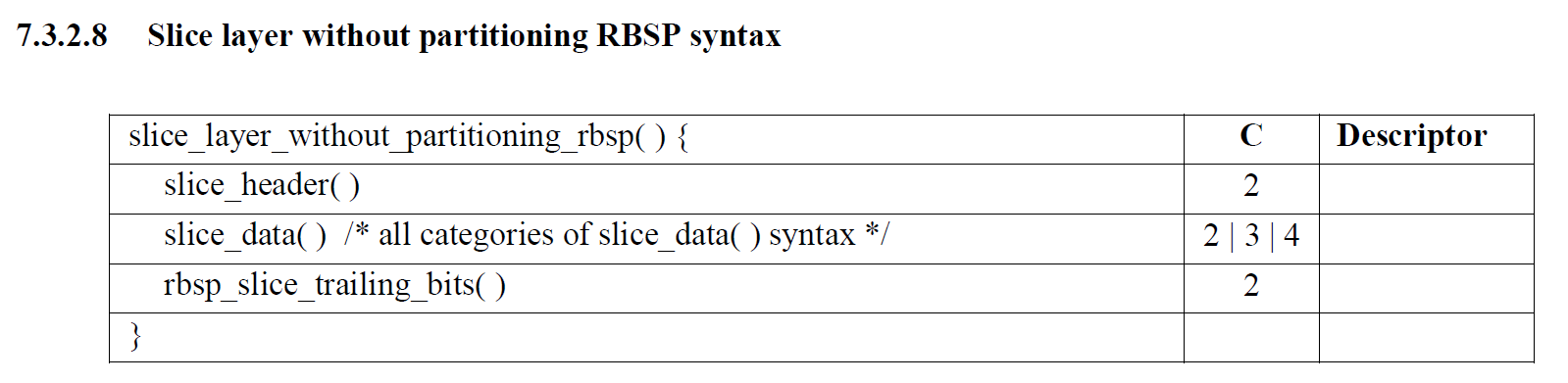

Syntax

bottom_field_flag

- bottom_field_flag == 1 : the slice is part of a coded bottom field

- bottom_field_flag == 0 : the picture is a coded top field

- When this syntax element is not present for the current slice, it shall be inferred to be equal to 0

field_pic_flag

- field_pic_flag == 1 : the slice is a slice of a coded field

- field_pic_flag == 0 : the slice is a slice of a coded frame.

- When this syntax element is not present for the current slice, it shall be inferred to be equal to 0

| nal_unit_type | Content | Syntax structure |
|---|---|---|
| 1 | Coded slice of a non-IDR picture | slice_layer_without_partitioning_rbsp( ) |
| 5 | Coded slice of an IDR picture | slice_layer_without_partitioning_rbsp( ) |
| 20 | Coded slice extension | slice_layer_extension_rbsp( ) |

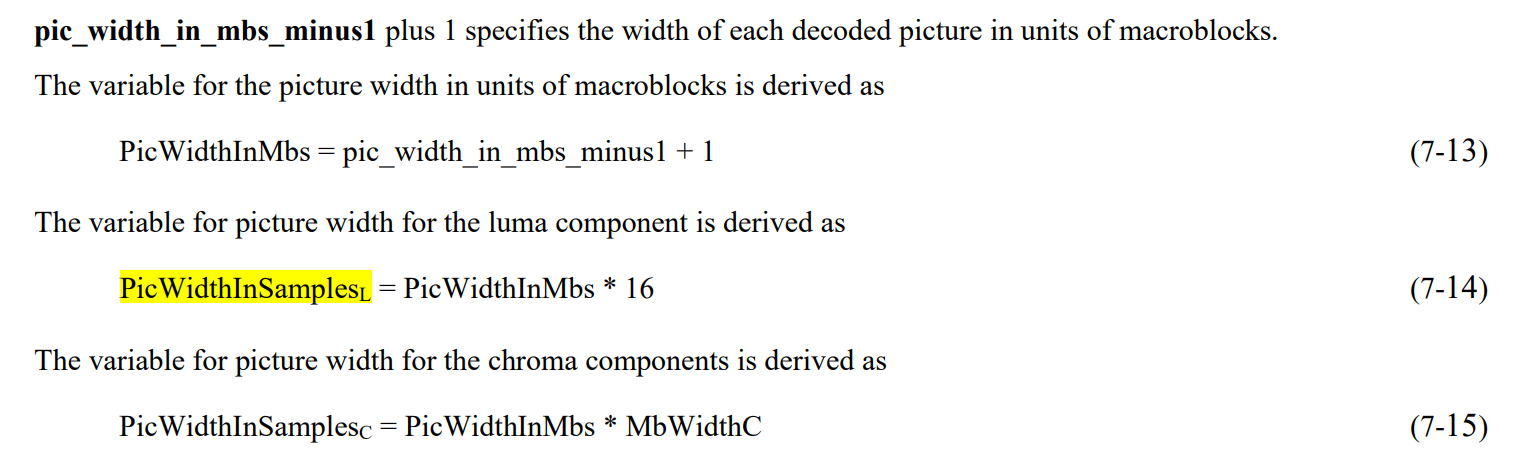

Resolution

Parameters for computing the resolution

Formula summary

| Parameter | Value |
|---|---|
| PicWidthInMbs | pic_width_in_mbs_minus1 + 1 |
| PicWidthInSamplesL | PicWidthInMbs * 16 |
| PicHeightInMapUnits | pic_height_in_map_units_minus1 + 1 |
| FrameHeightInMbs | ( 2 − frame_mbs_only_flag ) * PicHeightInMapUnits |
| Frame width (horizontal) | PicWidthInSamplesL − ( CropUnitX * (frame_crop_right_offset + frame_crop_left_offset) ) |
| Frame height (vertical) | FrameHeightInMbs * 16 − ( CropUnitY * (frame_crop_bottom_offset + frame_crop_top_offset) ) |
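The formulas above can be combined into one function, sketched below. CropUnitX and CropUnitY are not defined in the table; the sketch derives them from chroma_format_idc and frame_mbs_only_flag and assumes separate_colour_plane_flag is 0. The function name `sps_resolution` and its keyword arguments are made up for illustration.

```python
# (SubWidthC, SubHeightC) per chroma_format_idc: 1 = 4:2:0, 2 = 4:2:2, 3 = 4:4:4
SUB_SAMPLING = {1: (2, 2), 2: (2, 1), 3: (1, 1)}


def sps_resolution(pic_width_in_mbs_minus1, pic_height_in_map_units_minus1,
                   frame_mbs_only_flag, chroma_format_idc=1,
                   crop_left=0, crop_right=0, crop_top=0, crop_bottom=0):
    """Return (width, height) in luma samples after cropping."""
    pic_width_in_mbs = pic_width_in_mbs_minus1 + 1
    pic_height_in_map_units = pic_height_in_map_units_minus1 + 1
    frame_height_in_mbs = (2 - frame_mbs_only_flag) * pic_height_in_map_units

    if chroma_format_idc == 0:  # monochrome
        crop_unit_x = 1
        crop_unit_y = 2 - frame_mbs_only_flag
    else:
        sub_width_c, sub_height_c = SUB_SAMPLING[chroma_format_idc]
        crop_unit_x = sub_width_c
        crop_unit_y = sub_height_c * (2 - frame_mbs_only_flag)

    width = pic_width_in_mbs * 16 - crop_unit_x * (crop_right + crop_left)
    height = frame_height_in_mbs * 16 - crop_unit_y * (crop_bottom + crop_top)
    return width, height


print(sps_resolution(119, 67, 1, crop_bottom=4))
```

The example values describe a typical 1080p stream: 68 macroblock rows give 1088 lines, and a bottom crop offset of 4 with CropUnitY = 2 removes the extra 8 lines.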

Tools

FFmpeg, FFprobe

- NAL Infos

ffprobe -show_streams -i video.mp4

ffprobe -show_frames input.h264 | findstr pict_type

ffmpeg -i video.h264 -c copy -bsf:v trace_headers -f null - > naluInfo.txt 2>&1

Definitions

- instantaneous decoding refresh (IDR) picture: a picture that can be decoded independently, without using reference pictures; it consists only of intra-coded I slices or SI slices

- f(n): fixed-pattern bit string using n bits written (from left to right) with the left bit first. The parsing process for this descriptor is specified by the return value of the function read_bits( n ).

- ue(v): unsigned integer Exp-Golomb-coded syntax element with the left bit first. The parsing process for this descriptor is specified in clause 9.1

- parsing process:

- reading the bits starting at the current location

- up to and including the first non-zero bit

- counting the number of leading bits that are equal to 0

leadingZeroBits = −1

for( b = 0; !b; leadingZeroBits++ )

b = read_bits( 1 )

codeNum = 2^leadingZeroBits − 1 + read_bits( leadingZeroBits )
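The ue(v) parsing process can be sketched in Python as follows. `BitReader` and `read_ue` are hypothetical helpers written for this note, not a library API; the input is assumed to be RBSP data with emulation prevention bytes already removed, and there is no bounds checking.

```python
class BitReader:
    """Read bits from a byte string, most significant bit first."""

    def __init__(self, data: bytes):
        self.data = data
        self.pos = 0  # current position in bits

    def read_bits(self, n: int) -> int:
        value = 0
        for _ in range(n):
            byte = self.data[self.pos // 8]
            bit = (byte >> (7 - self.pos % 8)) & 1
            value = (value << 1) | bit
            self.pos += 1
        return value


def read_ue(reader: BitReader) -> int:
    """Decode one unsigned Exp-Golomb code, ue(v)."""
    leading_zero_bits = 0
    while reader.read_bits(1) == 0:  # count zeros up to the first 1 bit
        leading_zero_bits += 1
    # codeNum = 2^leadingZeroBits - 1 + read_bits( leadingZeroBits )
    return (1 << leading_zero_bits) - 1 + reader.read_bits(leading_zero_bits)


# 0xA6 = 1 010 011 0: three codes followed by one unused bit
reader = BitReader(bytes([0xA6]))
print([read_ue(reader) for _ in range(3)])
```

The bit strings `1`, `010` and `011` decode to 0, 1 and 2, which matches the start of the Exp-Golomb code table.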

- RBSP: Raw Byte Sequence Payload

- HRD : Hypothetical Reference Decoder

- a hypothetical reference decoder model

- used to regulate the bitrate of the compressed video

- num_units_in_tick (doc.458) : the number of time units of a clock operating at the frequency time_scale Hz that corresponds to one increment (called a clock tick) of a clock tick counter. num_units_in_tick shall be greater than 0. A clock tick is the minimum interval of time that can be represented in the coded data. For example, when the frame rate of a video signal is 30 000 ÷ 1001 Hz, time_scale may be equal to 60 000 and num_units_in_tick may be equal to 1001. See Equation C-1.

- time_scale (doc.458) : the number of time units that pass in one second. For example, a time coordinate system that measures time using a 27 MHz clock has a time_scale of 27 000 000. time_scale shall be greater than 0

- category :

- indicates the category of a syntax element

- used in the slice data partitioning process

- class of syntax element

- 2: slice data partition A

- 3: slice data partition B

- 4: slice data partition C

- coordinate from a to b: to align the coordinates from a to b

- To “crop” an image is to remove or adjust the outside edges of an image (typically a photo) to improve framing or composition, draw a viewer's eye to the subject, or change the size or aspect ratio

- CBR: constant bit rate

- CPB: coded picture buffer

- DPB: decoded picture buffer

- DUT: decoder under test

- HRD: hypothetical reference decoder

- HSS: hypothetical stream scheduler

- IDR: instantaneous decoding refresh

- MB: macroblock

- MBAFF: macroblock-adaptive frame-field coding

- MFC: multi-resolution frame compatible stereo coding

- MSB: most significant bit

- MVC: multiview video coding

- MVCD: multiview video coding with depth

- NAL: network abstraction layer

- RBSP: raw byte sequence payload

- RPU: reference processing unit

- SEI: supplemental enhancement information

- SODB: string of data bits

- SVC: scalable video coding

- UUID: universal unique identifier

- VBR: variable bit rate

- VCL: video coding layer

- VLC: variable length coding

- VSP: view synthesis prediction

- VUI: video usability information

- 3D-AVC: multiview video coding with depth as specified in Annex I

Refs

- ISO_IEC_14496-10_2020(AVC)

- H264/AVC architecture, profile and level

- H264_Analysis_Tools

- nal-units-and-parameter-sets-in-h-264

- RTP Payload Format for H.264 Video

- RTP analysis

- H.264 stream analysis

- HLS.js

- Chroma Format Explanation

- the-h-264-sequence-parameter-set

- Media terminology (codec / GOP / bitrate / profile / level)

- GOP explanation

- DTS, PTS explanation
